Google's indexing process has undergone a major transformation, leaving new websites struggling to get noticed. Here's what happened:

- Selective Indexing: In 2025, Google stopped automatically indexing all new sites. Instead, it now prioritizes content based on user engagement and quality.

- AI Content Crackdown: Sites relying on AI-generated content saw traffic drop by 50–80% due to stricter algorithm updates targeting low-quality, mass-produced pages.

- E-E-A-T Standards: Experience, Expertise, Authoritativeness, and Trustworthiness became critical. Google now verifies author credentials and penalizes sites with unverified or irrelevant authorship.

- Technical Barriers: Poor site structure, duplicate content, and inefficient crawling can block indexing. New sites now face a "probation period" of 3–6 weeks before gaining visibility.

Key Takeaways:

- Content Quality Is King: Google's algorithms now prioritize original, user-focused content over sheer volume.

- Build Trust: Verified author profiles and backlinks from trusted sites are essential for indexing.

- Fix Technical Issues: Ensure proper use of canonical tags, robots.txt, and internal linking to avoid indexing delays.

For site owners and SEO professionals, the focus has shifted to proving value and building trust from day one. Tools like IndexMachine can automate indexing tasks, but a hands-on approach to content and credibility remains crucial.


Why Google Stopped Indexing New Sites

Google's approach to indexing new websites has shifted dramatically due to three main factors: changes in algorithms, stricter E-E-A-T standards, and technical hurdles. Let's break these down to understand what's happening.

AI-Driven Quality Filters and Algorithm Changes

With the explosion of AI-generated content, Google had to adjust its algorithms. By January 2025, 19.10% of top search results included AI-generated content. To manage this influx, Google introduced "retrieval economics" - a system that evaluates whether processing a URL is worth the computational effort. Essentially, if your content doesn't offer new or useful information compared to what's already ranking, it's likely to be ignored or demoted.

The March 2026 Core Update specifically targeted "scaled content abuse", which refers to mass-producing pages aimed at gaming the system instead of providing value. The impact was massive: affected sites saw 60% to 90% drops in organic visibility within just 72 hours. Danny Sullivan, Google's Search Liaison, summed it up bluntly:

"We don't really care how you're doing this content at scale, whether it's AI, automation, or humans. It's going to be a problem."

To enforce these changes, Google deployed 16,000 search quality raters who reviewed results and trained machine learning models to detect low-quality AI content.

Greater Emphasis on E-E-A-T and Content Quality

Google has also made E-E-A-T (Experience, Expertise, Authoritativeness, and Trustworthiness) a central element of its indexing process. Indexing is no longer automatic; it's now a privilege that new sites must earn. If a website lacks credibility, it often remains in the "Discovered – not indexed" category until it proves its expertise and reliability. By 2026, Google began verifying author credentials against professional registrations and LinkedIn profiles before granting visibility.

This approach has yielded clear results. For example, a medical website increased its daily organic visits from 12,000 to 48,000 - a 300% increase, or four times the original traffic - simply by adding detailed author pages with MD credentials, hospital affiliations, and links to PubMed publications. The content itself didn't change; the difference came from verified author signals. On the flip side, sites unable to prove their authors' expertise often find themselves stuck in indexing limbo. Google also penalizes websites that publish at unnatural rates, such as 50 to 500 articles per month, labeling these as suspicious "content velocity patterns".

Technical Problems That Block New Sites

Even with great content, technical issues can prevent new sites from being indexed. Google now differentiates between "Discovered – not indexed" (URLs known but not fetched due to low predicted value) and "Crawled – not indexed" (URLs fetched but rejected due to quality issues). A study of 1.7 million URLs revealed that 88% of non-indexed pages were excluded due to quality problems, not technical errors.

However, technical missteps can still hurt. For instance, bloated page designs with duplicated DOM sections or excessive inline SVGs increase retrieval costs, making Google less likely to crawl your site. Improper use of the Indexing API - meant only for JobPosting or BroadcastEvent data - can also lead to spam penalties across your domain.

Google has also moved to quality-weighted crawling, where crawl frequency depends on signals like domain trust, E-E-A-T factors, and brand recognition. New sites with little to no traffic often fail to trigger the "crawling loop" because they lack behavioral signals like clicks and dwell time, causing Google to deprioritize them.

How This Affects Website Owners and SEO Professionals

Recent changes in Google's indexing process have created a challenging landscape for website owners, especially those launching new sites. While well-established websites continue to enjoy strong visibility, newer domains face significant barriers to gaining traction. The shift from automatic indexing to a system based on trust and merit has redefined how websites grow and how SEO professionals need to adapt their strategies.

Problems Facing New Websites

New websites now encounter what experts refer to as a "probation period." During this time, Google cautiously samples and minimally indexes content before deciding if a site deserves broader visibility. For domains launched in 2026, this initial indexing phase typically takes 3 to 6 weeks. Achieving stable crawling and gaining "normal trust" can extend the process to 3 or 4 months. This creates a frustrating paradox: without indexing, it's nearly impossible to attract the traffic needed to demonstrate the site's value.

The aggressive de-indexing processes in recent years further illustrate these challenges. Even technically sound websites can struggle. For instance, a regional portal from 2026 with 750 server-side rendered pages saw 96% of its URLs fail to index due to insufficient Information Gain. The site had a staggering 342:13 ratio of "Discovered" versus "Crawled" pages. Unlike in the past, new domains are no longer automatically discovered through registration. Instead, they must send clear signals, such as Search Console submissions or backlinks from established websites, to appear on Google's radar.

These obstacles mean that SEO professionals must rethink their strategies to align with Google's trust-based model.

Changes Required for SEO and Marketing Strategies

To navigate this new reality, SEO and marketing strategies require a complete overhaul. The old method of publishing keyword-optimized content and waiting for Google to index it is no longer sufficient. Instead, SEO professionals need to focus on building trust and credibility from the outset. Mohit Sharma, a Developer and SEO Writer, aptly summarized the situation:

"Indexing is a trust decision. For new sites, Google asks: Is this site legitimate? Is the content original? Is the domain safe?"

This emphasis on trust has led to a shift in content strategy. Following Google's March 2026 core update, 55% of tracked websites experienced ranking fluctuations. Sites that were heavily impacted saw traffic drops of 20% to 35% on average. In response, SEO experts are prioritizing topical authority clusters rather than relying on standalone, high-quality posts. Additionally, sites that feature original research and first-party data have seen their organic visibility increase by an average of 187% after the update.

Author credibility has also become a key factor. Google now verifies the credentials of authors by cross-referencing their information across the web. This makes detailed author pages, including professional affiliations and external validation, a necessity. To speed up discovery on other search engines, many SEO professionals are adopting the IndexNow protocol, which lets them submit URLs to participating engines such as Bing and Yandex in a single request, diversifying traffic sources. While established sites can still achieve indexing on Google within hours, newer sites face a waiting period of at least 2 to 4 weeks.
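As a rough illustration of the IndexNow submission mentioned above, the sketch below builds (but does not send) a batch request. The shared endpoint and the `host`, `key`, `keyLocation`, and `urlList` fields come from the public IndexNow protocol; the function name, the example URLs, and the key value are hypothetical, and the protocol expects the key to also be hosted as a text file on your own domain.

```python
import json
from urllib.parse import urlparse
from urllib.request import Request

# Shared IndexNow endpoint; participating engines exchange submissions.
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(urls, key, key_location=None):
    """Build (but do not send) an IndexNow POST request for URLs on one host."""
    if not urls:
        raise ValueError("urls must not be empty")
    hosts = {urlparse(u).netloc for u in urls}
    if len(hosts) != 1:
        raise ValueError("all URLs in one submission must share a host")

    payload = {"host": hosts.pop(), "key": key, "urlList": list(urls)}
    if key_location:
        # Only needed when the key file is not at the site root.
        payload["keyLocation"] = key_location

    return Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


# Hypothetical URLs and key, for illustration only.
request = build_indexnow_request(
    ["https://example.com/new-post", "https://example.com/pricing"],
    key="hypothetical-key-123",
)
# To actually submit: urllib.request.urlopen(request)
```

Building the request separately from sending it makes it easy to log or inspect the payload before anything leaves your server.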

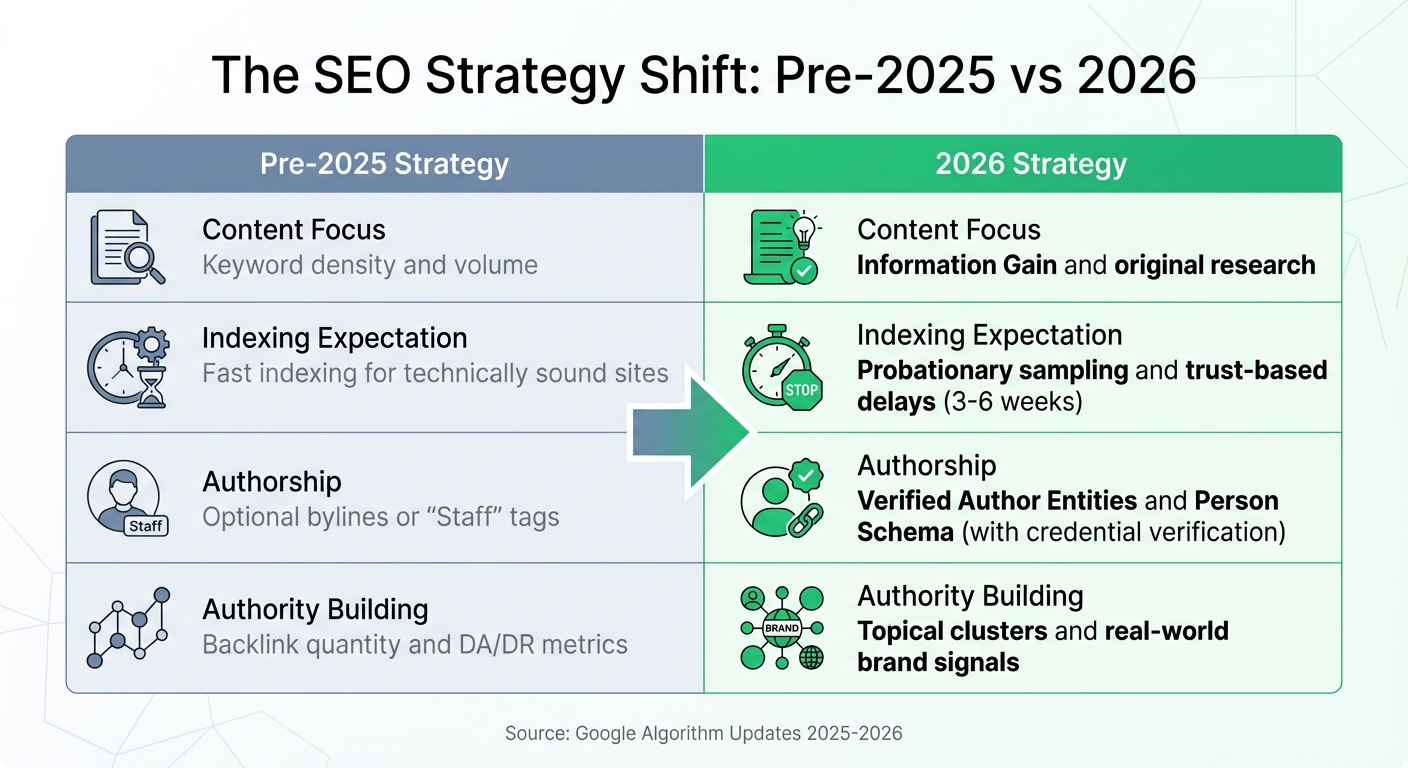

The table below highlights the shift in SEO strategies, offering a clear comparison between past and current approaches:

| SEO Approach | Pre-2025 Strategy | 2026 Strategy |
|---|---|---|
| Content Focus | Keyword density and volume | Information Gain and original research |
| Indexing Expectation | Fast indexing for technically sound sites | Probationary sampling and trust-based delays |
| Authorship | Optional bylines or "Staff" tags | Verified Author Entities and Person Schema |
| Authority Building | Backlink quantity and DA/DR | Topical clusters and real-world brand signals |

These changes underscore the need for a proactive approach to SEO, with a clear focus on building trust and demonstrating value from the very beginning.
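For the "Person Schema" row in the table above, one possible starting point is a small helper that emits schema.org `Person` markup as JSON-LD. This is a minimal sketch: the function name and the example author are hypothetical, and adding the markup is a way to state authorship clearly, not a guarantee of how Google will evaluate it.

```python
import json


def person_jsonld(name, job_title, profile_urls, affiliation=None):
    """Return schema.org Person markup as a JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "Person",
        "name": name,
        "jobTitle": job_title,
        # sameAs lists external profiles that describe the same person.
        "sameAs": list(profile_urls),
    }
    if affiliation:
        data["affiliation"] = {"@type": "Organization", "name": affiliation}
    return json.dumps(data, indent=2)


# Hypothetical author, for illustration only.
snippet = person_jsonld(
    name="Dr. Jane Example",
    job_title="Cardiologist",
    profile_urls=["https://www.linkedin.com/in/jane-example"],
    affiliation="Example General Hospital",
)
print(f'<script type="application/ld+json">\n{snippet}\n</script>')
```

The printed `<script>` element would go in the `<head>` or body of the author page it describes.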

How to Get Your Site Indexed Under the New System

Indexing your website in 2026 demands a hands-on, multi-faceted approach that blends technical know-how, strong content, and effective use of Google's tools. The era of passive indexing is gone - site owners must now actively demonstrate value, address technical barriers, and keep a close eye on performance metrics.

Fixing Technical SEO Issues

Technical problems are a frequent reason for indexing failures, even when your content is top-notch. Start by auditing your robots.txt file to ensure you're not unintentionally blocking Googlebot with overly broad "Disallow" rules (e.g., Disallow: /). Similarly, check for noindex meta tags or X-Robots-Tag HTTP headers that could prevent pages from being indexed.
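The audit described above can be partly scripted. The following sketch uses only the Python standard library to test whether a robots.txt body blocks Googlebot for a given URL and whether a page carries a `noindex` directive in a robots meta tag or an `X-Robots-Tag` header value. The function names are illustrative, and fetching the robots.txt file, HTML, and headers is left to the caller.

```python
from html.parser import HTMLParser
from urllib.robotparser import RobotFileParser


def googlebot_allowed(robots_txt, url):
    """Return True if the given robots.txt text lets Googlebot fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch("Googlebot", url)


class _RobotsMetaFinder(HTMLParser):
    """Collect the content of <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = []

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        name = (attrs.get("name") or "").lower()
        if tag == "meta" and name in ("robots", "googlebot"):
            self.directives.append((attrs.get("content") or "").lower())


def has_noindex(html, x_robots_tag=""):
    """Return True if the page HTML or the X-Robots-Tag value contains noindex."""
    finder = _RobotsMetaFinder()
    finder.feed(html)
    sources = finder.directives + [x_robots_tag.lower()]
    return any("noindex" in source for source in sources)


# An overly broad rule blocks the whole site, including this page.
print(googlebot_allowed("User-agent: *\nDisallow: /", "https://example.com/blog/post"))
print(has_noindex('<meta name="robots" content="noindex, follow">'))
```

Running both checks against a handful of important URLs before requesting indexing helps rule out accidental blocks first.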

Pay close attention to canonical tags. If a page's canonical tag points to a different URL, Google treats it as a strong hint to index that other URL instead of the current page. Make sure each canonical tag points to the URL you actually want indexed. Here's a quick guide to common duplicate content problems and their fixes:

| Cause of Duplication | Common Example | Recommended Fix |
|---|---|---|
| URL Parameters | Tracking codes, session IDs | Use canonical tags (the GSC URL Parameters tool was retired in 2022) |
| Server Config | HTTP vs. HTTPS; WWW vs. non-WWW | Set up 301 redirects to consolidate versions |
| E-commerce Variants | Product in different colors/sizes | Use drop-down menus or canonical tags |
| Pagination | Blog/product list pages (/page/2/) | Apply unique titles or noindex tags to deep pages |
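To make the URL-parameter and server-config rows concrete, here is a minimal sketch that derives one canonical URL from its variants. It assumes the preferred version is HTTPS without `www`, and the list of tracking parameters is an illustrative guess; adjust both to match your own site.

```python
from html import escape
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed set of parameters that never change page content.
TRACKING_PARAMS = {
    "utm_source", "utm_medium", "utm_campaign", "utm_term", "utm_content",
    "gclid", "fbclid", "sessionid",
}


def canonical_url(url):
    """Strip tracking parameters and normalize scheme and host."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    if host.startswith("www."):
        host = host[4:]  # assumption: the non-www host is the preferred one
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in TRACKING_PARAMS
    ]
    kept.sort()  # stable parameter order so equivalent URLs compare equal
    return urlunsplit(("https", host, parts.path or "/", urlencode(kept), ""))


def canonical_tag(url):
    """Render the <link rel="canonical"> element for a page URL."""
    return f'<link rel="canonical" href="{escape(canonical_url(url), quote=True)}">'


print(canonical_url("http://www.example.com/shoes?utm_source=news&color=red&sessionid=9"))
# prints https://example.com/shoes?color=red
```

Parameters that do change the content, such as `color` here, are kept, so genuine variants still need the drop-down or canonical decision from the table.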

Keep your site structure simple - important content should be no more than 3–4 clicks away from your homepage. For JavaScript-heavy websites, use the URL Inspection tool in Google Search Console to confirm that Googlebot can render your pages properly. If key elements are missing in the "View Crawled Page" results, server-side rendering might be your best option.
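The click-depth guideline above can be checked with a breadth-first search over your internal link graph. The sketch below assumes you already have a mapping from each page to the pages it links to (for example, from a crawler export); the function names and sample paths are hypothetical.

```python
from collections import deque


def click_depths(links, home="/"):
    """Return the minimum number of clicks from home to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def audit_structure(links, home="/", max_clicks=4):
    """Return (pages deeper than max_clicks, pages unreachable from home)."""
    depths = click_depths(links, home)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    too_deep = sorted(page for page, depth in depths.items() if depth > max_clicks)
    orphans = sorted(all_pages - set(depths))
    return too_deep, orphans


# Hypothetical link graph: page -> pages it links to.
site = {
    "/": ["/blog", "/pricing"],
    "/blog": ["/blog/post-1"],
    "/blog/post-1": ["/blog/post-1/appendix"],
    "/old-landing": ["/"],
}
print(audit_structure(site, max_clicks=2))
# prints (['/blog/post-1/appendix'], ['/old-landing'])
```

Pages in the second list are reachable only by direct URL, which is the orphan situation that internal linking is meant to prevent.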

Simplify redirect chains (e.g., Page A → B → C) into a single direct path (A → C) to save crawl budget and speed up indexing. For immediate updates, consider using the IndexNow protocol, which notifies search engines like Bing and Yandex the moment you publish new content.
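Collapsing redirect chains is easy to automate once your redirects are in a simple source-to-target map. The following is a minimal sketch under that assumption; the function name is illustrative, and it raises an error when it meets a loop instead of guessing a destination.

```python
def flatten_redirects(redirects):
    """Map every redirect source directly to its final destination."""
    flattened = {}
    for source in redirects:
        seen = {source}
        target = redirects[source]
        # Follow the chain until the target is no longer itself redirected.
        while target in redirects:
            if target in seen:
                raise ValueError(f"redirect loop involving {target}")
            seen.add(target)
            target = redirects[target]
        flattened[source] = target
    return flattened


# Page A -> B -> C becomes A -> C and B -> C.
print(flatten_redirects({"/a": "/b", "/b": "/c"}))
# prints {'/a': '/c', '/b': '/c'}
```

The flattened map can then be turned into 301 rules in whatever server or CMS configuration your site uses.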

Once technical barriers are addressed, shift your focus to boosting your content and link-building strategies.

Creating Better Content and Earning Backlinks

After fixing technical issues, the next step is creating high-quality content and building strong links. Enhance your content with original insights, user reviews, and multimedia elements to make it stand out.

Backlinks and internal links are critical for helping Googlebot find and prioritize your pages. Without these, pages can become "orphans" that are easily ignored. High-value backlinks from trusted websites also signal to Google that your content deserves frequent crawling and better visibility.

Indexed content now plays a key role in AI-generated answers, such as those from tools like ChatGPT or Claude. Strong indexing ensures your content is considered for inclusion in these AI-driven summaries.

Using Google Search Console and Indexing Tools

To ensure your efforts pay off, use Google Search Console to monitor your site's indexing status. A healthy site typically indexes 85–95% of its crawled pages. Check the "Pages" report for issues like:

- "Crawled – Currently Not Indexed": Usually a sign of content quality or intent problems.

- "Discovered – Currently Not Indexed": Points to crawl budget limitations or weak internal linking.

Submit your XML sitemaps and monitor the "Submitted vs. Indexed" ratio. If less than 50% of your submitted pages are indexed, it could indicate a broader content quality issue. Also, check the "Enhancements" section for structured data errors (e.g., for FAQs, products, or videos). Fixing these errors can help your content appear in rich results and AI Overviews.
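The thresholds in this section reduce to simple arithmetic, sketched below. The 50% and 85% cut-offs come from the figures above; the middle "needs review" band and the function name are assumptions added for illustration, and the counts would come from your own Search Console reports.

```python
def indexing_health(submitted, indexed):
    """Return (ratio, label) for a Submitted vs. Indexed comparison."""
    if submitted <= 0:
        raise ValueError("submitted must be positive")
    if not 0 <= indexed <= submitted:
        raise ValueError("indexed must be between 0 and submitted")

    ratio = indexed / submitted
    if ratio < 0.50:
        label = "likely content quality issue"
    elif ratio < 0.85:
        label = "needs review"  # assumed middle band, not from the article
    else:
        label = "healthy"
    return ratio, label


# Example: 200 submitted URLs, 90 of them indexed.
ratio, label = indexing_health(200, 90)
print(f"{ratio:.0%} indexed: {label}")
# prints 45% indexed: likely content quality issue
```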

Here's a real-world example: A SaaS company resolved a "Crawled – Currently Not Indexed" issue by restructuring their content and adding internal links. Within six days, their pages were indexed, leading to a 340% increase in traffic.

For high-priority pages, use the "Request Indexing" feature in Google Search Console. Google allows 10 to 100 manual requests per day, depending on your account. Use this sparingly and only after resolving root issues like robots.txt errors or improving content depth.

When publishing new content, add 2–5 contextual internal links from already-indexed, high-traffic pages. This signals importance to Google right away. Lastly, tackle Core Web Vitals issues by fixing template-level errors, which can resolve problems for multiple pages at once. Note that the Google Indexing API is not a general shortcut for real-time indexing: it is intended only for pages with JobPosting or BroadcastEvent structured data, and using it for other URL types risks the spam penalties described earlier.

| Status | Meaning | Primary Cause | Fix Priority |
|---|---|---|---|
| Discovered – Currently Not Indexed | Google found the URL but hasn't visited yet | Crawl budget exhaustion or weak internal links | High - Requires better linking/authority |

How IndexMachine Solves Indexing Problems

In 2026, getting your website indexed is no walk in the park. It involves constant effort - monitoring sitemaps, manually submitting URLs, checking for errors, and repeating the process until your pages are finally indexed. This tedious process is what led to the creation of IndexMachine, a tool designed to automate the entire indexing workflow and eliminate the hassle of manual tasks.

Automated Submission to Multiple Search Engines

IndexMachine simplifies indexing by directly integrating with the Google Search Console API and Bing Webmaster Tools API. Unlike workarounds that Google restricted in 2024, this tool uses official endpoints for reliability. It keeps an eye on your sitemap daily, automatically submitting new pages to Google, Bing, and even LLM crawlers like those used by ChatGPT and Perplexity.

Manual submission can take 2–3 hours for just 100 pages, but IndexMachine handles this on autopilot. It even includes auto-retry logic, ensuring your pages are submitted until indexing is confirmed. This hands-off approach saves time and ensures your content reaches search engines without delays.

Monitoring Indexing Status and Fixing Errors

IndexMachine doesn't stop at submission - it also monitors your pages continuously. It provides detailed insights at the page level, including coverage, crawl dates, and indexing status (whether indexed, in progress, or rejected). The platform's dashboard features visual charts that let you track indexed versus unindexed pages over time, helping you identify trends at a glance.

One standout feature is its real-time 404 alerts. If a page returns a 404 error, IndexMachine immediately notifies you so you can address broken links before they impact your crawl budget or rankings. As user Javier Prasetyo humorously put it:

"The 404 alert feature is actually more useful than the indexing for me lol 😄"

You can also filter pages by status - pending, rejected, or indexed - and even toggle specific search engines on or off for each domain. By tackling these common bottlenecks, IndexMachine ensures a smoother path to better visibility.

Pricing Plans for Different Business Sizes

IndexMachine offers three lifetime pricing plans, all of which can be purchased with the BETA50 discount code:

| Plan | Regular Price | With BETA50 | Domains | Pages | Best For |
|---|---|---|---|---|---|
| SaaS Builder | $25 | $12.50 | 1 | 1,000 | Single site owners, new blogs |
| 5 Projects | $100 | $50 | 5 | 1,000 per domain | Small agencies, side projects |
| Solopreneur | $169 | $84.50 | 10 | 10,000 per domain | Growing businesses, multi-site owners |

All plans include full automation, 404 alerts, daily reports, and submissions to Google, Bing, and LLMs. Google allows up to 20 URLs per day, while other search engines support up to 200 URLs daily. As user Sarah Squires highlighted, lifetime access starting at $12.50 is a fantastic deal.

Conclusion: Working with Google's New Indexing Rules

Google's approach to indexing has evolved from being purely technical to an evaluation steeped in economics. Now, every URL is judged on a "cost-to-value" basis, with Google essentially asking: Does this page bring enough fresh value to justify the resources needed to crawl it? In this environment, simply having a valid sitemap or a fast server isn't enough anymore. Your content has to stand out and genuinely add something new to Google's already massive index.

To navigate this shift, a two-part strategy is essential: cutting out low-value URLs that waste crawl budgets while also boosting credibility and originality in your content. For example, websites that removed 30–40% of thin or duplicate pages during 2025–2026 saw quicker recoveries during core updates. At the same time, those that integrated verified author profiles with professional credentials experienced average visibility gains of 187%. By combining technical improvements with content upgrades, sites can position themselves for better indexing outcomes.

Handling all of this manually - keeping tabs on indexing status, resubmitting rejected pages, and monitoring multiple search engines - can quickly become overwhelming. Tools like IndexMachine simplify the process. It automates tasks like daily sitemap checks, instant resubmissions with retry logic, and real-time alerts for issues like 404 errors. Plus, it provides detailed insights at the page level across platforms like Google, Bing, and even large language model (LLM) crawlers.

The websites thriving in 2026 aren't necessarily the largest or oldest - they're the ones that adapted quickly. They streamlined their site structures to minimize crawl waste, implemented Person schema with sameAs links to verify expertise, and eliminated inefficiencies caused by faceted navigation and parameter variants. Paired with automation tools like IndexMachine, these strategies lay the groundwork for sustainable visibility and long-term success.

FAQs

How can I tell why my pages are 'Discovered' but not indexed?

When Google marks your pages as "Discovered" but not indexed, it means the pages were found but weren't added to its search index. This usually happens because of issues like thin or low-quality content, duplicate content, weak internal linking, or a lack of signals indicating the page is important.

To address this, focus on improving the content to make it more useful and engaging, resolve any duplication issues, strengthen internal linking to highlight the page's relevance, and use the URL Inspection tool in Google Search Console to request indexing.

What proof of E-E-A-T helps a brand-new site get indexed?

To strengthen indexing, it's crucial to demonstrate Experience, Expertise, Authoritativeness, and Trustworthiness (E-E-A-T). This can be achieved by:

- Highlighting author credentials to showcase expertise.

- Publishing original research or verified, fact-based information.

- Building topical authority through consistent, in-depth content on a specific subject.

These elements became even more critical after Google's December 2025 update, which prioritized high-quality, authoritative content as key ranking signals.

How do I get indexed faster during Google's 3–6 week probation period?

To get your site indexed faster during Google's 3–6 week probation period, prioritize both technical optimization and strong content. Start by using Google Search Console's URL Inspection Tool to request indexing for your pages. Address any issues like crawl errors or problematic noindex tags that might block Google's access.

Another effective step is to create internal links from high-authority pages on your site to your new pages. This helps Google navigate and prioritize your content. Additionally, submitting an updated XML sitemap through Search Console can guide Google to your new pages more quickly.
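If your platform does not already generate a sitemap, the sketch below shows one way to build a minimal one with the Python standard library. The namespace is the one defined by the sitemaps.org protocol; the function name, URLs, and dates are hypothetical.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries):
    """Build sitemap XML (as bytes) from (url, lastmod) pairs.

    lastmod is a 'YYYY-MM-DD' string, or None to omit the element.
    """
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        if lastmod:
            ET.SubElement(url, "lastmod").text = lastmod
    return ET.tostring(urlset, encoding="utf-8", xml_declaration=True)


# Hypothetical pages, for illustration only.
xml_bytes = build_sitemap([
    ("https://example.com/", "2026-01-15"),
    ("https://example.com/blog/new-post", None),
])
print(xml_bytes.decode("utf-8"))
```

Save the output as `sitemap.xml` at the site root and submit that URL in the Search Console Sitemaps report.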